Introduction to Transformer Architecture

Dive deep into the architecture that powers GPT, BERT, and other modern LLMs. Learn about self-attention, multi-head attention, and positional encoding.

Latest Insights

Expert articles, tutorials, and insights on machine learning, AI, and data science.

Master the art of fine-tuning large language models for your specific use cases. Covers parameter-efficient fine-tuning (PEFT) techniques such as LoRA and QLoRA, plus production deployment strategies.

Explore cutting-edge applications of computer vision in medical imaging, from tumor detection to surgical assistance. Real-world case studies included.

Learn how reinforcement learning algorithms enable robots to acquire complex skills, from simulation to real-world deployment, with practical examples.

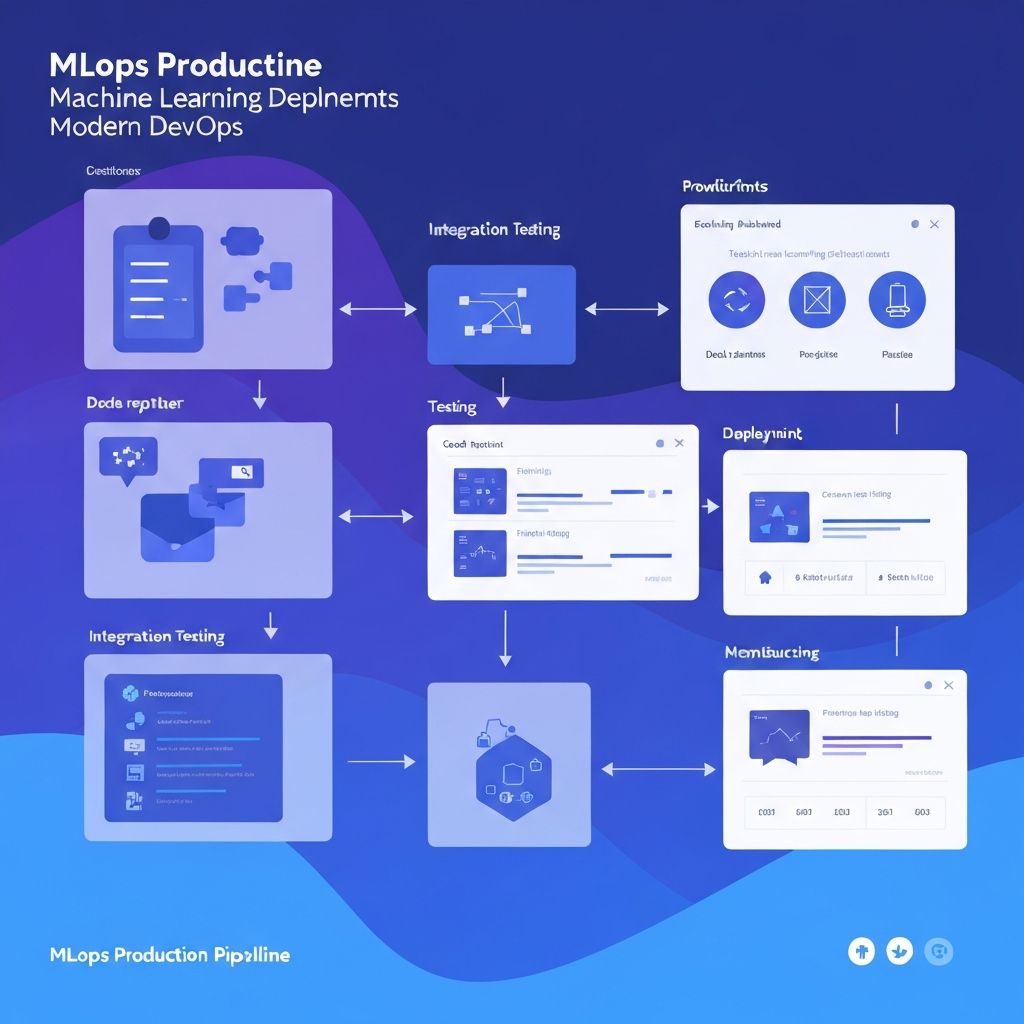

Complete guide to deploying ML models in production. Covers CI/CD pipelines, monitoring, A/B testing, model versioning, and scalability patterns.

Deep dive into optimization algorithms: SGD, Adam, AdamW, and beyond. Understand learning rate scheduling, gradient clipping, and convergence strategies.

Dr. Sarah Chen

A visual guide to understanding how transformers process sequences using self-attention mechanisms.

James Wilson

Complete guide to fine-tuning large language models for your specific use case.

Dr. Emily Park

Learn to implement YOLO for real-time object detection in computer vision applications.

Dr. Alex Kumar

Build a complete neural network from scratch using only Python and NumPy.

Michael Roberts

Apply reinforcement learning algorithms to train AI agents for game environments.

Lisa Thompson

Best practices for deploying machine learning models in production environments.

Dr. Sarah Chen

Deep dive into how self-attention works in transformer models.

James Wilson

Create retrieval-augmented generation systems for enhanced AI responses.